Map the Intelligence

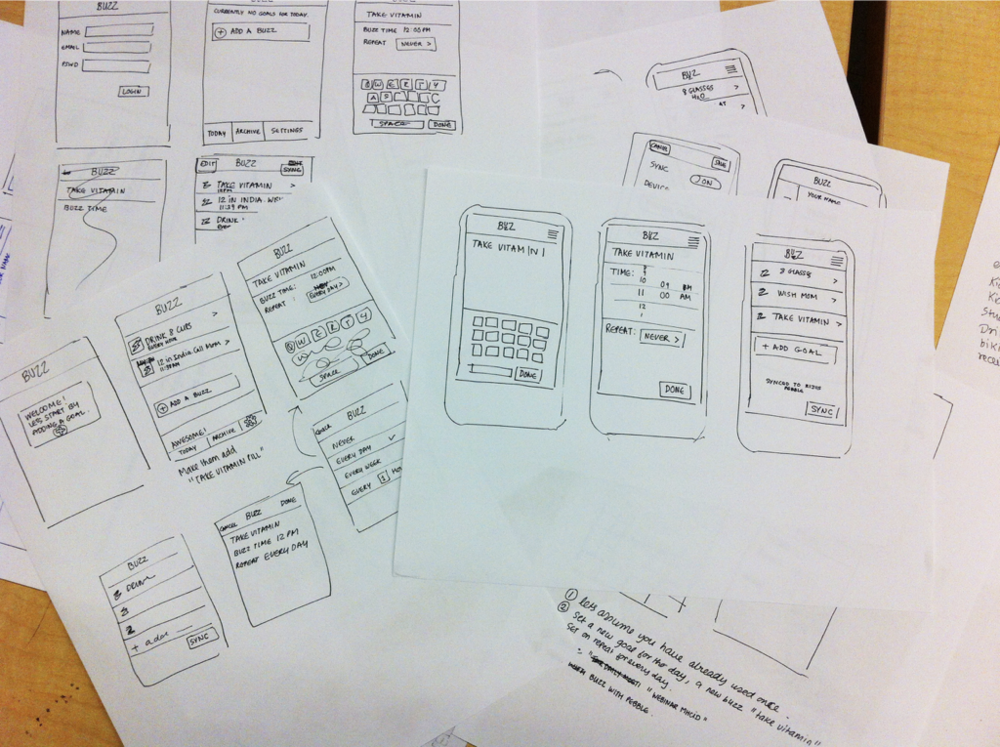

We start by understanding what AI does in your product and what humans need to see, control, and override. We map the decision boundaries — where the machine acts autonomously and where humans stay in the loop.

Every AI agent needs a human interface. We build AI-native products where intelligence is the core experience — not a bolt-on.

How we build


How do humans and AI collaborate in this product? We prototype the interaction patterns — copilot flows, approval mechanisms, feedback loops — and test them with real users before writing production code.

The minimum viable intelligence interface. We ship the first version that lets real users work with real AI — not a demo, not a prototype, but a product that earns trust through use.

The product gets smarter through use. We instrument how humans and AI actually work together, identify where the AI fails and where humans add friction, and iterate. Every cycle makes the cockpit tighter.

What we build

The core of every AI-native product. We design and build copilot interfaces that let AI assist, suggest, and act — while keeping humans in control. Custom-trained to your domain, integrated with your backend.

Run inference on the device itself — no round-trip to the cloud. We deploy models to iOS, Android, and edge hardware for real-time personalization, predictions, and adaptations that work offline.

Natural voice controls, speech-to-text, text-to-speech, and conversational flows. We build hands-free AI interfaces using Whisper, ElevenLabs, or your preferred stack — with fallbacks and analytics built in.

Embed on-demand image, video, and audio generation directly in your product. DALL·E, Veo, FLUX, Whisper and more — with moderation, provenance tracking, and seamless UX.

A boutique digital art marketplace built with an LA designer collective. iOS and web, featuring collaborations with Nicola Formichetti and events in NYC and LA.

IoT and embedded systems for the fashion tech company behind Lady Gaga's Flying Dress. Fiber optic LEDs, Bluetooth Low Energy, and app-controlled animations — presented at New York Fashion Week.

iPad interface for Apfel's automated storage towers: fully automated high-bay warehouses used in factories across Germany. Real-time inventory management and remote control of each tower's robotic extractor system.

A fast, lightweight macOS screen capture and recording tool built for teams. Zappy lets you instantly grab screenshots or record your screen, annotate, and then share via link, all in just a few keystrokes. Deeply integrated with macOS, it supports customizable shortcuts, multi-display setups, and instant uploads.
